By LISA BURCH

Loneliness is no longer a private struggle. It’s a public health crisis, showing up in hospitals, classrooms, workplaces and counseling offices across Long Island and the country.

Access to counseling services and the availability of Certified Community Behavioral Health Clinics are essential. However, despite mental health being a pillar of community awareness, few seek out services. Instead, more people are turning to artificial-intelligence-powered chatbots, attempting to fill companionship and therapy gaps.

With fewer affordability and accessibility constraints, the increased use is understandable. The Harvard Business Review recently found that therapy and companionship were No. 1 on its list of public uses for generative AI and large language models.

The rise of AI comes with very interesting possibilities, but an overreliance could be incredibly dangerous.

There is no question that AI has changed how people seek support. Artificial intelligence chatbots are available 24/7, speak in reassuring tones and never appear rushed or distracted. For someone feeling lonely, anxious or overwhelmed, at any hour, that accessibility can feel like relief.

Lisa Burch: Human condition.

But accessibility is not care and conversation is not therapy. At the EPIC Family mental-health clinics, we see every day what happens when people reach the edge of crisis.

On Long Island, the need for mental health and addiction services is not theoretical or emerging. It is urgent and ongoing. In Nassau County alone, our Mobile Crisis Unit – operated by our South Shore Guidance Center – responded to nearly 3,500 mental-health emergencies last year.

These were not moments that could be solved with reassuring language or reflective prompts. Lives were at risk. Make no mistake about it: There is a loneliness epidemic on Long Island, and we must be clear and honest about what AI can and cannot do about it.

Emerging evidence indicates that AI “companion” apps can reduce short-term loneliness or help sort out options using data. A Harvard Business School working paper examining AI companions across multiple studies found that interacting with an AI companion produced measurable, short-term reductions in loneliness – and that feeling “heard” was a key driver.

In other words, when people perceive responsiveness, validation and sustained attention, loneliness can ease.

People who feel isolated may withdraw, avoid outreach or struggle to find words for what they’re experiencing. If AI helps someone take one step toward reflection by naming emotions, tracking stressors or practicing coping skills, that can be meaningful.

But in July, OpenAI CEO Sam Altman warned ChatGPT users against using the chatbot as a “therapist” because of privacy concerns. Users have no legal guarantee of confidentiality when using ChatGPT, so any personal information is vulnerable to hackers and data brokers.

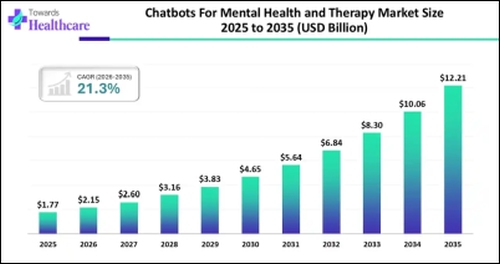

Artificial growth: The market size for therapeutic chatbots is expected to skyrocket over the next decade. (Source: www.towardshealthcare.com)

Therapy is more than supportive dialogue; it’s a safe and private space. It involves clinical judgment, ethical standards and accountability. This is especially true when people are at risk of harming themselves or others, experiencing psychosis, or navigating trauma.

A recent Stanford University AI study evaluated popular “therapy” chatbots against widely accepted therapeutic expectations such as avoiding stigma, responding safely to suicidality and not reinforcing delusions, and found significant problems.

The researchers reported that some chatbots showed higher stigma toward certain conditions (including schizophrenia and alcohol dependence) and, in testing, some provided unsafe responses that could enable self-harm.

Therapy is a relationship built on safety, responsibility and skilled intervention. If a system cannot reliably recognize crisis, challenge distorted thinking when needed and respond without bias, it should not be positioned as therapy.

Artificial intelligence can feel helpful because AI agents are programmed to optimize engagement by mirroring tone, affirming feelings and sustaining intimacy. The problem is that true therapeutic breakthroughs come from challenging thought, tackling hard realities and fortifying boundaries.

Sam Altman: ChatGPT is not a therapist.

In therapy, support and challenge coexist. A clinician may validate emotional pain while also positively confronting harmful beliefs or behaviors. Artificial intelligence that defaults to agreement can unintentionally reinforce rumination, dependence or distorted thinking – particularly for vulnerable users.

We must acknowledge that research suggests heavy emotional reliance on chatbots may, over time, correlate with greater loneliness and emotional dependency for some users. A recent report on OpenAI and MIT Media Lab research noted that heavy users who engaged in more emotionally expressive interactions showed higher loneliness and dependency indicators, though the direction of causality is not clear.

So, what can we do with AI in the mental-health-and-companionship space? A responsible approach would be to understand where AI can assist and where it should not be used.

Low-risk supports like journaling prompts, habit trackers and structured coping exercises may be appropriate when paired with clear disclaimers and pathways to human care. Of course, higher-risk scenarios like suicidality, psychosis, complex trauma, abuse, severe depression and substance dependence require human professionals and crisis-ready systems.

We should never lose sight of the fact that loneliness is not merely a lack of conversation, but a lack of belonging. Technology can help people practice reaching out, but it cannot replace the healing power of human relationships.

Tech will continue to evolve. Our responsibility is to ensure that innovation expands access to real support without creating new harm. An AI chatbot can talk like a friend – therapy still requires a human.

Lisa Burch is president and CEO of EPIC Family of Human Service Agencies.