By DAVID A. CHAUVIN //

Now that George Santos’ vacated Congressional seat has been filled and Long Island politics is out of the national spotlight, it’s time for Long Islanders to gear up for 2024’s major elections – but as they do, concerns about the impact of digital misinformation on democratic processes are reaching new heights.

In an era dominated by social media platforms, the use of deepfakes and the influence of artificial intelligence are emerging as significant challenges. Despite platforms’ promises to enhance security measures, recent incidents highlight the persistent misinformation issue, posing a serious risk to informed and fair voting outcomes.

What’s more, the use of AI-generated content and deepfakes has extended past the dark web and criminal underworld and become a prominent tool in political campaigns.

Ron DeSantis’ presidential campaign utilized AI-generated images to build a narrative among Republican voters about the close relationship between Donald Trump and former chief medical advisor Anthony Fauci. The post included six photos, three of which were real and three of which were fake.

In retrospect, AI-detection experts were able to flag aspects that made it clear they were fake – but those aspects are relatively unnoticeable to the untrained eye, and they’ll only get harder to spot as technology advances.

This isn’t the only example of AI being used nefariously, of course, but it comes with an interesting wrinkle. When NPR reported on the move by the DeSantis campaign, the article included a quote from “a person with knowledge of the DeSantis operation,” who noted, “This was not an ad, it was a social media post.”

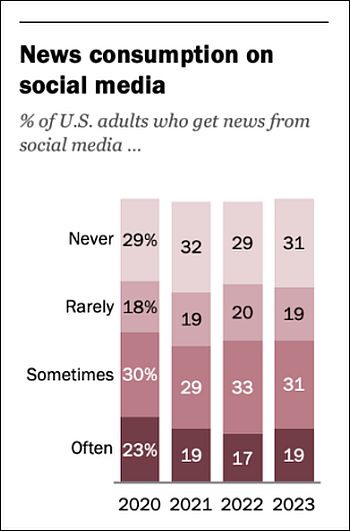

Blurring the line between fact and fiction is a dangerous game, and even more so when roughly 70 percent of Americans get at least some of their information from X, Meta and similar platforms: On social media, anything goes.

To address these issues, platforms are working to implement new AI disclosures. However, the effectiveness of such measures remains uncertain, especially considering the evolving nature of AI-generated content and the involvement of independent creators in spreading political messaging.

For example, Meta recently outlined plans to make political content opt-in across its apps – part of its effort to step back from and deprioritize political content. The decision to distance itself from political discussions reflects a strategy to reduce users’ exposure to potentially harmful content.

It’s easy to dismiss this as small potatoes or to say it’s the user’s fault for being duped. But it’s important to note that even a single image can have an impact – and even if it can be removed or debunked, ideas can become embedded, far beyond political campaigning.

Consider the source: Roughly 70 percent of Americans consume some “news” content via social media, where “truth” is relative. (Source: Pew Research Center)

An AI-generated photo of an explosion at the Pentagon spread so far throughout social media this past May that it caused a temporary dip in the stock market. Last month, AI-generated pornographic images of Taylor Swift went viral on X. They were quickly blocked, but not before they were shared more than 45 million times.

We’ve even seen school-aged children in New Jersey, and Long Islanders with no celebrity profile, fall victim to humiliation by generative AI.

Brands also face the risk of reputational damage and financial loss through sophisticated AI-driven scams and deepfakes, which can mimic corporate communications with alarming accuracy. In 2022, scammers joined a Zoom call with crypto developers and investors and used a deepfake hologram to trick them into believing that an official would meet with them and list their business creations, a move that could have made them a lot of money.

For individuals, especially creators and public figures, AI can fabricate content that misrepresents actions or statements, leading to personal and professional harm. When it comes to the average citizen, anyone who posts images publicly online is at risk of having a deepfake created and exposed somewhere.

That’s why it’s so important to monitor the information about you or your brand that’s in the public eye, and to carefully curate the narrative connected to your name or brand.

(Spoiler Alert) If the makers of Crock-Pot felt the urge to respond after an episode of “This Is Us” tainted the product’s perception among potential customers, imagine what could happen if the only thing target audiences know about your brand is a deepfaked video.

The incidents involving AI-generated content affecting stock markets and personal reputations highlight the far-reaching consequences of unchecked technology. As we navigate this era of digital complexity, it’s imperative that we prioritize authenticity, critical thinking and informed engagement to safeguard the integrity of our democratic institutions – and our personal dignity.

In the face of advancing technology and its misuse, a joint effort by platforms, policymakers and the public is essential to uphold the values of truth and fairness in our society. We’ve had enough fake. It’s time for something more real.

David A. Chauvin is executive vice president of ZE Creative Communications.