By GREGORY ZELLER //

With so much concern over artificial intelligence-powered devices listening to us, are we paying enough attention to what AI is saying?

They certainly are at Stony Brook University, where scientists have completed a new study dissecting “social spambots” – artificial accounts designed to sound human and influence public debate, likely wreaking havoc on your social media platforms right now.

The study by SBU and University of Pennsylvania researchers, published recently in the scientific journal Findings of the Association for Computational Linguistics, applies cutting-edge machine-learning and natural-language-processing algorithms to the social bots, ultimately estimating 17 attributes – including five personality traits (such as openness to experience and neuroticism) and eight emotions (such as joy, anger and fear) – to determine how “human” they actually sound.

H. Andrew Schwartz: Nothing bot the truth.

It’s a fairly serious issue – other recent studies show social spambots not only influencing public opinion, but getting exponentially better at it.

In “Characterizing Social Spambots by their Human Traits,” senior author H. Andrew Schwartz, an associate professor in SBU’s Department of Computer Science, and his fellow researchers shed new light on the phenomenon, assessing the capabilities of current-generation bots – and revealing useful tells to help us not-so-helpless humans spot them.

“Imagine you’re trying to find spies in a crowd, all with very good but also very similar disguises,” Schwartz said. “Looking at each one individually, they look authentic and blend in … [but] when you zoom out and look at the entire crowd, they are obvious because the disguise is just so common.

“The way we interact with social media, we are not zoomed out – we just see a few messages at once,” he added. “This approach gives researchers and security analysts a big-picture view to better see the common disguise of the social bots.”

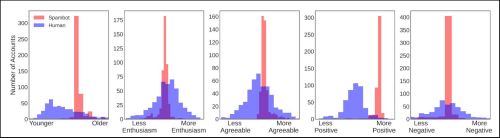

The study considered more than 3 million tweets authored by 3,000 bot accounts and 3,000 genuine human accounts. Based on the language of each tweet, researchers estimated the age, gender and “positive/negative sentiment” of each account holder, along with those personality traits and emotions.

Individually, the bots appeared human, portraying what SBU called “reasonable values for their estimated demographics.” But viewed as a whole, the social bots looked more like clones of each other – more specifically, clones of a person in his or her late 20s with a “very positive language tone,” according to the university.

In fact, researchers discovered that the uniformity of the social bots’ scores on the 17 human traits was strong enough to trip “bot detectors” that might otherwise miss the AI moles in a crowd.

Typical bot detectors rely on a combination of factors – including the extent of a person/bot’s social network and images posted on the person/bot’s account – to make their determinations. But with accounts grouped solely on the results of the 17-trait analysis, standard detectors were able to pick out a cluster composed entirely of bots.

Like a sore thumb: Social bots stick out, if you know what to look for.

Based on those results, SBU and UPenn scientists were able to create an “unsupervised bot detector with great accuracy,” Stony Brook said this week.
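The intuition that uniformity itself gives the bots away can be illustrated with a small sketch. This is not the researchers' detector, whose details are not described here. It uses synthetic data and a simple nearest-neighbor density check as a stand-in technique, and every name in it (`make_accounts`, `knn_density_scores`, `flag_tight_cluster`) along with the spread values is an assumption made for illustration.

```python
import math
import random


def make_accounts(n_humans, n_bots, n_traits=17, seed=0):
    """Build synthetic trait scores (illustrative only, not real data).

    Humans vary widely on every trait; bots are near-copies of one profile.
    Returns (points, labels), where label 1 marks a synthetic bot.
    """
    rng = random.Random(seed)
    humans = [[rng.gauss(0.0, 1.0) for _ in range(n_traits)]
              for _ in range(n_humans)]
    bot_profile = [rng.gauss(0.0, 1.0) for _ in range(n_traits)]
    bots = [[p + rng.gauss(0.0, 0.05) for p in bot_profile]
            for _ in range(n_bots)]
    return humans + bots, [0] * n_humans + [1] * n_bots


def knn_density_scores(points, k=5):
    """Mean Euclidean distance from each account to its k nearest neighbors."""
    scores = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q)
                       for j, q in enumerate(points) if j != i)
        scores.append(sum(dists[:k]) / k)
    return scores


def flag_tight_cluster(points, k=5):
    """Flag accounts in an unusually dense region, using no labels.

    The threshold sits in the middle of the largest gap between sorted
    scores, so accounts packed much closer together than the rest are flagged.
    """
    scores = knn_density_scores(points, k)
    ordered = sorted(scores)
    _, idx = max((ordered[i + 1] - ordered[i], i)
                 for i in range(len(ordered) - 1))
    threshold = (ordered[idx] + ordered[idx + 1]) / 2
    return [s < threshold for s in scores]


points, labels = make_accounts(n_humans=60, n_bots=40)
flags = flag_tight_cluster(points)
print(sum(flags), "accounts flagged as a tight cluster")
```

No single synthetic bot looks unusual on its own here; it is only the crowd-level view, how closely each account sits to its neighbors, that separates the two groups.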

Salvatore Giorgi, a PhD student in UPenn’s School of Engineering and Applied Science and SBU visiting scholar, said the results portend an entirely new strategy for detecting bots infiltrating social media platforms – and stopping them from spreading bad information to unsuspecting users.

“If a Twitter user thinks an account is human, then they may be more likely to engage with that account,” noted Giorgi, listed as the study’s lead author. “Depending on the bot’s intent, the end result of this interaction could be innocuous, but it could also lead to engaging with potentially dangerous misinformation.”