By DAVID A. CHAUVIN

Much has been made of the surface-level dangers of generative artificial intelligence: It writes like a robot, invents facts and struggles with nuance.

These are the quirks we laugh at, forward to coworkers, chalk up to growing pains. But as chatbots become permanent fixtures in how we work – writing emails, analyzing data, even brainstorming strategy – we need to look deeper.

The real threat isn’t wonky grammar or hallucinated sources. It’s the risk that your company’s secrets could leak out.

In early 2023, Samsung engineers – trying to make their lives a little easier – used OpenAI’s ChatGPT to troubleshoot software bugs. In the process, they uploaded sensitive internal source code to a public-facing AI platform.

Once that information was entered, it was no longer just Samsung’s.

The company swiftly banned ChatGPT across its operations. Apple and other large corporations, fearing similar breaches, followed suit. The incident barely registered in public discourse, but it should’ve been a five-alarm fire.

David Chauvin: To bot, or not to bot.

We tend to anthropomorphize chatbots. Their tone is polite. They appear smart, responsive and eager to help. It’s easy to forget what’s happening in the background.

Every prompt entered is data. Every piece of data can be seen, stored, reviewed and potentially reused. Even the most advanced chatbots, like ChatGPT or Google Gemini, are not secure vaults – they’re black boxes powered by hungry machine-learning models, many of which use your inputs to improve future outputs.

Unless you’re using a specially designed enterprise version of a chatbot, something very few companies have, your data could be exposed through bugs, breaches or misuse.

This isn’t hypothetical. In March 2023, a ChatGPT glitch allowed users to see other people’s chat histories, including names, emails and parts of their payment information. It was patched quickly, but not before it made clear just how little control users have once their words are sent.

This is where the risk goes beyond bad information and becomes a cybersecurity and compliance issue.

Imagine an employee uploads a client contract for quick summarization, pastes in proprietary financial projections for help with an analysis or simply drafts a sensitive email for review. Those inputs can be retained by the service, used to train future models or accessed by engineers during moderation. In worst-case scenarios, they could be scooped up in a future breach – or surface in another user’s results due to a bug.
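For readers who want to see what a basic guardrail against the scenarios above might look like, here is a minimal sketch in Python. It assumes a company wants to scrub obvious identifiers from a prompt before it leaves the building. The `redact` function and the three patterns are hypothetical illustrations, not part of any chatbot vendor's tooling, and simple pattern matching like this would miss most genuinely sensitive material, such as contract terms or source code.

```python
import re

# Hypothetical patterns chosen for illustration only. A real deployment
# would need a much broader list and would still miss free-form secrets.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),  # 13 to 16 digits
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt: str) -> str:
    """Replace anything matching a known pattern with a labeled placeholder."""
    for label, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[{label} REDACTED]", prompt)
    return prompt


if __name__ == "__main__":
    print(redact("Email jane.doe@example.com about SSN 123-45-6789"))
    # prints: Email [EMAIL REDACTED] about SSN [SSN REDACTED]
```

A filter like this is a seat belt, not a policy. It catches careless slips, but deciding what should never be typed into a public chatbot remains a human job.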

Loose lips: The Ashley Madison data breach is a classic example of the importance of securing sensitive data.

Data breaches are far too common. And while the consequences may at times be amorphous – email addresses, birthdays, etc., which should still raise eyebrows – there are many examples of leaked content presenting real, tangible dangers.

The 2015 Ashley Madison data leak most explicitly comes to mind. Ashley Madison is an infamous dating site marketed to people seeking extramarital affairs; 10 years ago, hackers exposed the private information of more than 30 million users, who’d been sold on advanced confidentiality and privacy protection.

Real names, emails, financial transactions, even sexual preferences flew out the door. Careers were lost. Marriages collapsed. The Ashley Madison breach became a cautionary tale about the illusion of online privacy.

A decade later, many of us are making a similar mistake – this time with AI chatbots. And it’s not just Skynet doomsday scenarios or Isaac Asimov fever dreams: Consider the New York Islanders’ efforts to integrate AI into the fan experience, to inform fans about the ideal times to use the restrooms or get the freshest foods. Concession-stand pileups immediately followed.

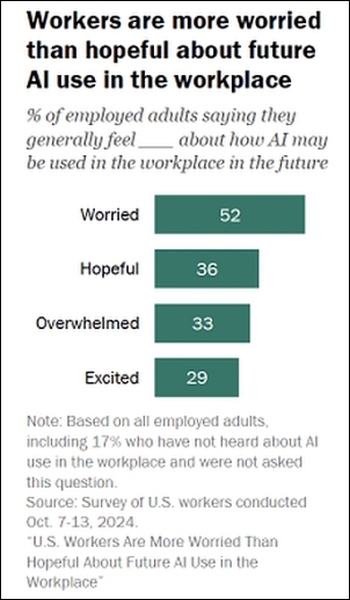

Works for them — or not: A majority of U.S. workers are already concerned about generative AI’s increasing role in the workplace. (Source: Pew Research Center)

It’s not just outbound risk, either. Inbound threats include chatbots generating or recommending code that’s riddled with vulnerabilities, intentionally or unintentionally. A chatbot can offer programming suggestions that sound authoritative but introduce major security gaps.

Enterprise technology analysts warn that these tools, while helpful, are not always trained to detect malicious patterns or account for secure coding practices. The technology is all so new that it’s almost impossible to fully understand the hidden risks or unintended consequences.
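To make the inbound risk concrete, consider a small illustration in Python. It is not taken from any particular chatbot's output. The first function builds a database query by pasting user input directly into the query text, the kind of tidy-looking suggestion that can slip through review. The second uses a parameterized query, the standard safer alternative. The table, the data and the function names are all hypothetical.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with two made-up users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input becomes part of the SQL text itself.
    query = f"SELECT name, secret FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Safer: the driver passes the input as data, never as SQL.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    payload = "x' OR '1'='1"
    print(len(find_user_unsafe(conn, payload)))  # prints: 2 (every row leaks)
    print(len(find_user_safe(conn, payload)))    # prints: 0
```

Both versions return the right answer for an ordinary name, which is exactly why the flaw is easy to miss.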

Few companies are fully prepared. But according to the Pew Research Center, one in five U.S. workers say they used ChatGPT in 2024, and the number is growing.

When companies first moved their data to the cloud, many didn’t understand where their responsibilities ended and the cloud provider’s began. As generative AI continues to proliferate, we’re on the cusp of a similar inflection point – except now, the burden of protection doesn’t fall on a centralized IT department. It falls on every employee who types a prompt.

It’s important to cultivate a healthy skepticism around these tools. Generative AI is powerful, but it’s not magic. It’s not a confidante. It’s not an analyst. It’s software – impressive, but imperfect. And if it’s not handled with care, it could become a ticking time bomb set to blow the lid off your company’s most sensitive information.

It’s easy to laugh at a chatbot that invents historical facts or calls a chicken a mammal. But when your unreleased earnings report or internal-strategy memo leaks out, it stops being funny – and starts to become a crisis.

David A. Chauvin is executive vice president of ZE Creative Communications.